AI Doesn't Wait. Do You?

What the unstoppable advance of artificial intelligence teaches us about the fear of growing.

Just ten years ago, for most people, artificial intelligence felt like a concept from science fiction films. Today it generates images, writes code, helps diagnose diseases, and holds conversations nearly indistinguishable from human ones. It didn't ask for permission. It didn't wait for the world to be ready. It moved forward.

And that, curiously, terrifies us.

What deeply unsettles us about AI isn't only its speed. It's its indifference to uncertainty. It doesn't know if the result will be perfect. It doesn't know if the world will accept it. It simply processes, adjusts, learns, and tries again. Iteration after iteration, without existential paralysis.

"The problem isn't that AI is advancing too fast. The problem is that we are advancing too slowly."

Fear has a name: being perfectly prepared to never start

How many times have we waited for the perfect moment? The finished idea. The bulletproof plan. The complete skill set. We convince ourselves that if we wait just a little longer, we'll be ready. And meanwhile, time passes.

The MVP that's ready but unlaunched because "it still needs something." The disruptive idea that lives only in our heads, never shared with anyone for fear of ridicule or theft. The project sitting in a notes document, unopened for months. Ideas we never let breathe because protecting them feels safer than exposing them.

AI doesn't work that way. Every model goes out into the world incomplete, with errors, with biases. And precisely because it goes out, it receives real feedback. That feedback is what makes it better. The next model tends not to repeat the same mistakes: it has absorbed them as lessons.

We, on the other hand, protect our ideas so thoroughly from contact with reality that they never get to grow.

Moving forward doesn't mean being right. It means being in motion.

There is a very common confusion: we believe that moving forward requires having the correct answer, and that moving without certainty will end in failure. But what AI shows us is that progress is neither linear nor guaranteed. It is a process of constant readjustment.

Earlier models hallucinated frequently: they fabricated data, cited nonexistent sources, and answered with a confidence they didn't have. They had limited context, reasoned poorly on complex problems, and failed at tasks that today's models handle with ease. Those weren't defects that stopped the advance. They were the necessary price of going out into the world and receiving the feedback that the following models needed in order to improve.

Your current version can also be necessary, even if it isn't perfect.

"Growing isn't about reaching a final version of yourself. It's about committing to the process of constant updating."

The real danger of AI and what it reveals about us

Yes, there are real risks in the advance of artificial intelligence. But the most immediate one isn't the one that appears in movies. It's this: today, in many contexts, AI is already being chosen over people. Not because it doesn't make mistakes — it still hallucinates, it still fails — but because it delivers results that are good enough, at a speed and scale that humans cannot match alone. The danger isn't the robot of the future. It's the tool of today that is already competing with us in the present.

And here is where the question becomes personal: what makes you the one who gets chosen?

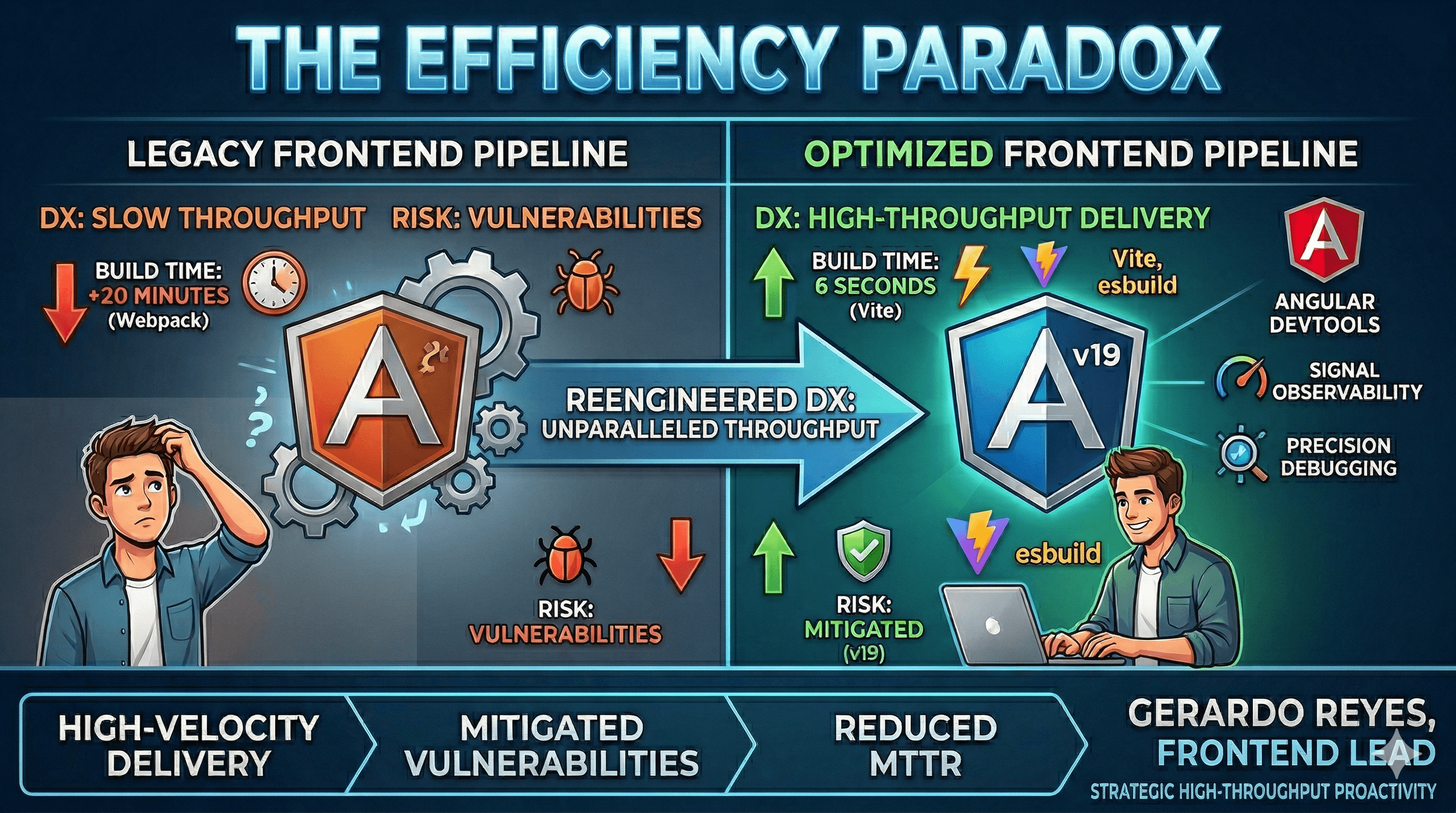

There is a kind of danger worth cultivating. Not that of someone who never makes mistakes, but that of someone who iterates so fast that their mistakes become an advantage. Imagine two people with the same goal and the same amount of time: two months. One waits, perfects, and tends to every detail before taking the first step. The other launches, receives feedback, analyzes it, incorporates the improvement, and launches again. At the end of those two months, the first has one nearly perfect iteration. The second has ten, each more refined than the last, and ten layers of real accumulated learning that the first simply doesn't have.

The difference isn't talent or available time. It's the commitment to the complete cycle: launch, listen, analyze, improve, repeat. Whoever adopts that rhythm becomes hard to catch, not because they started ahead, but because they never stop moving. That's what makes AI dangerous. And it's exactly what can make you dangerous too.

The only algorithm that matters

AI has a cycle: action → result → adjustment → new action. We have access to the same cycle, but with two things it doesn't have: fear and will. Fear always shows up, in everyone. Will is what determines what you do with it: whether you turn it into a brake that stops you or a signal that tells you something matters enough to try. Whoever develops that will doesn't eliminate fear; they learn to use it.

As Samuel Beckett wrote in Worstward Ho: "Ever tried. Ever failed. No matter. Try again. Fail again. Fail better."

Did something here resonate with you? Share it with someone who is also waiting for the perfect moment.